Nvidia 2024 AI Event and what it means for biotech

Blackwell and the rest of the announcements will hopefully ease the compute bottleneck in biotech

Unless you live under a rock, you know by now that Nvidia (NVDA) is the dominant force in compute acceleration, a market in which biotechnology is one of the segments.

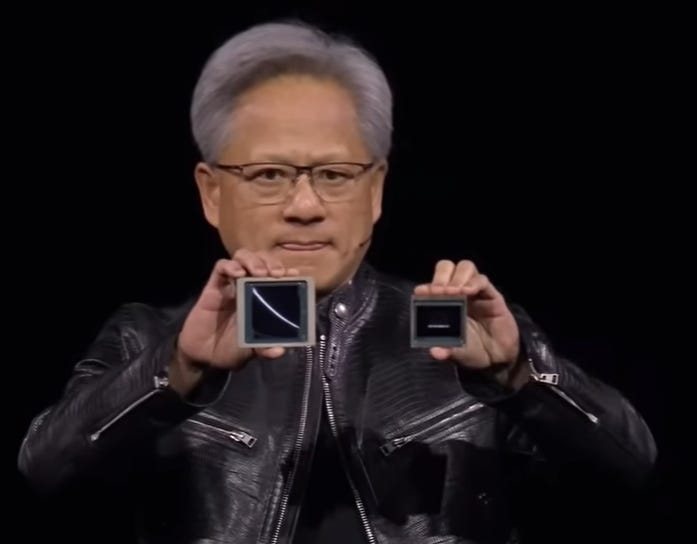

Nvidia recently held its keynote at its 2024 AI Event, where it announced Blackwell (left), the successor to Hopper (right). Nvidia's CEO, Jensen Huang, described Hopper as the chip that changed the world.

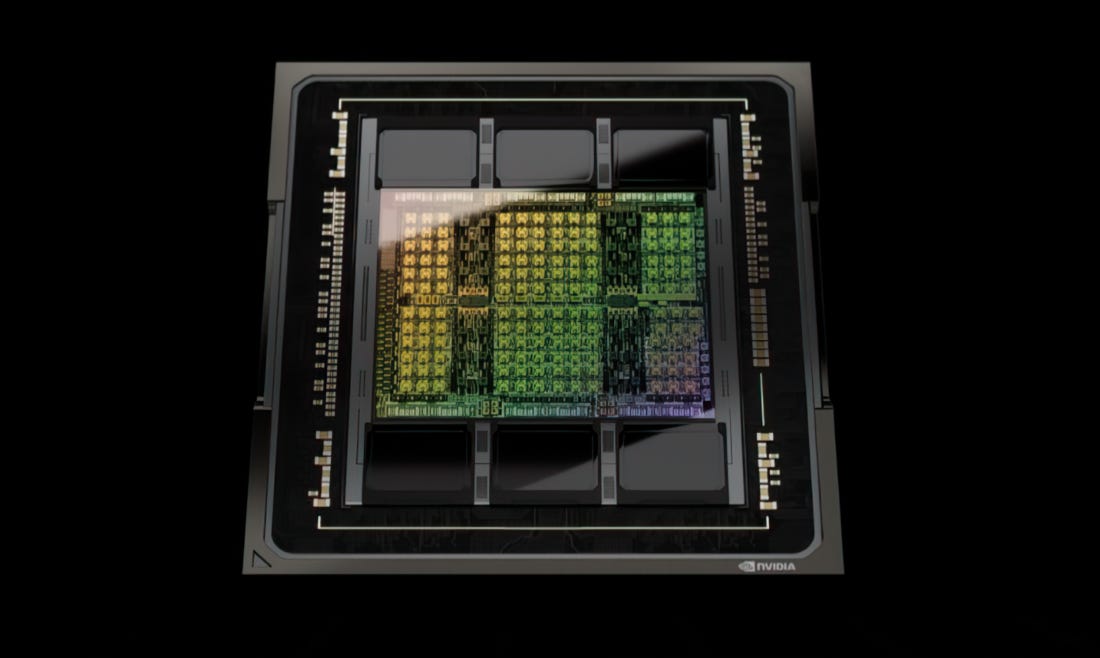

Blackwell packs 208 billion transistors across two dies. The thin line between them is a 10 TB/s chip-to-chip link, fast enough that the two dies behave as one giant chip with no memory-locality or cache issues.

Nvidia claims Blackwell offers up to a 30x performance increase over the H100 GPU for LLM inference workloads while, most importantly, using up to 25x less energy.

Blackwell goes into two types of system. The first is very similar to what we got used to with Hopper, and the two generations can be mixed and matched seamlessly.

Because so many data centres already run Hopper, those installations can be upgraded in place using the HGX configuration.

The Blackwell GPU will be available as a standalone GPU, or two Blackwell GPUs can be combined and paired with Nvidia’s Grace CPU to create the GB200 Superchip.

The second system is the one shown in the picture below: the smaller board carries the Grace CPU, with memory coherent between the CPU and the GPUs.

To connect all these GPUs, Nvidia also created the NVLink Switch chip:

The NVLink Switch chip on its own has 50 billion transistors, not far off the 80 billion in an H100. It carries four NVLinks, each running at 1.8 TB/s, which lets every GPU in the system talk to every other GPU at full speed.

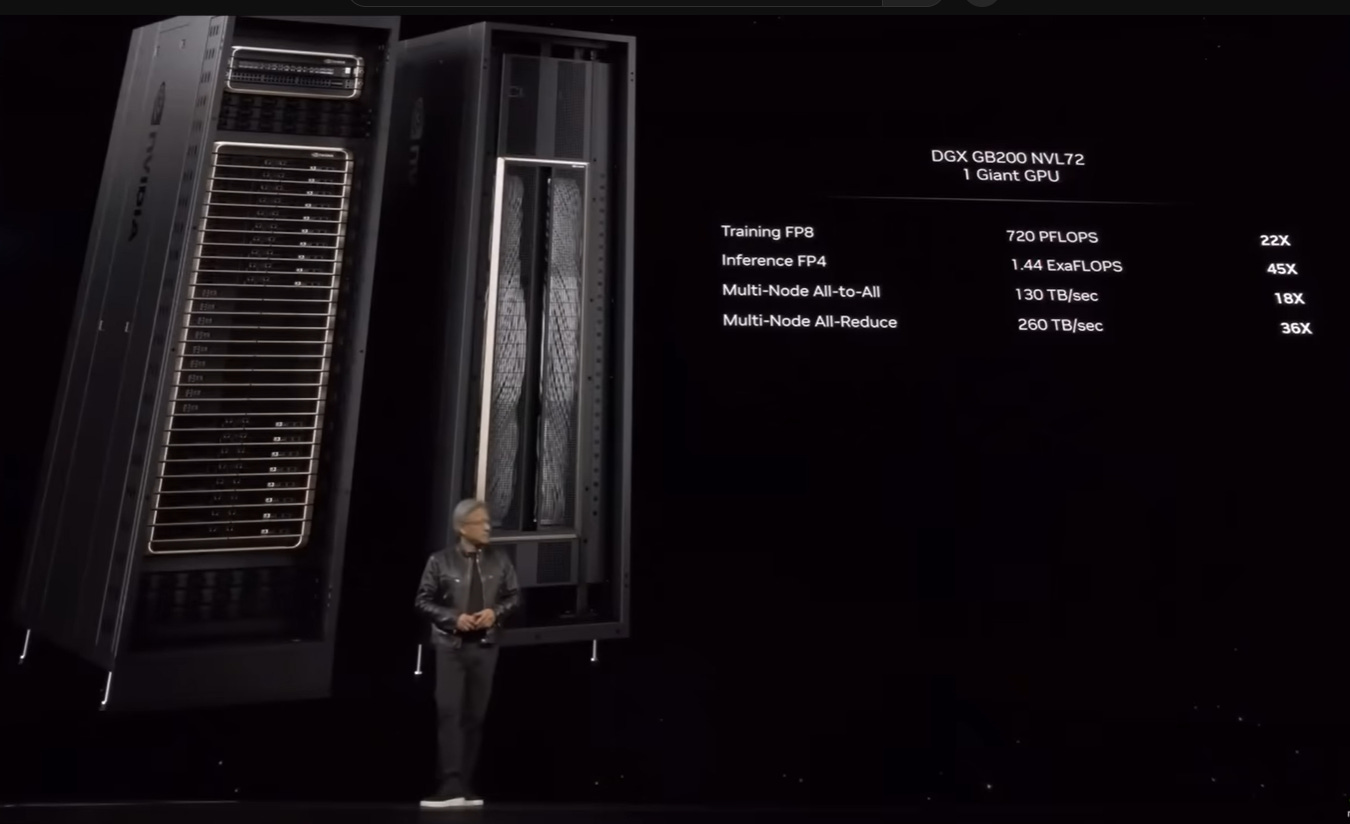

By combining the two technologies, a single DGX rack reaches exaflop-level AI performance.

The DGX SuperPOD is made up of eight or more DGX GB200 systems. Each of those systems has 36 Grace Blackwell 200 Superchips linked to run as a single computer. Nvidia says customers can scale the SuperPOD up to tens of thousands of GB200 Superchips, depending on their needs.

The DGX SuperPOD uses a liquid-cooled, rack-scale architecture. The system is cooled by fluid circulating through a series of pipes and radiators rather than by fans, since air cooling can be less efficient and more energy intensive. Huang said 2 litres of water a second will flow through the system, entering at room temperature (about 25°C) and leaving at about 45°C, the temperature of a hot tub.

Most of the major cloud providers are already signing up for this new generation of Nvidia GPUs. With the industry well into the A100-to-H100 transition, the B200 will help the big players plan their next step.

Nvidia also announced a service called NIM (Nvidia Inference Microservices). This is software that packages a large number of pre-trained models together with the CUDA software they need to run. Anyone will be able to download it from Nvidia and run it on their own data to create their own LLMs. In this way, Nvidia hopes to become the dominant AI foundry, in the same way that TSMC is the dominant advanced chip foundry.

Some say it is only a matter of time before Intel and AMD develop chips that challenge Nvidia's GPUs more effectively. That may be the case. However, this talk showed that Nvidia is not standing still and is developing cutting-edge technology at an astonishing pace.

I’ll discuss what this means for biotechnology after the paywall.